When you “vibe-code” a signup form, what does the AI produce? It learned from the internet. And the internet is full of manipulative UX and “dark patterns”.

This isn’t hypothetical. AI coding tools suggest button copy, form layouts, modal dialogs. They’ve been trained on millions of websites, many of which use dark patterns. The model doesn’t know which patterns are ethical and which are manipulative. It just knows what’s common.

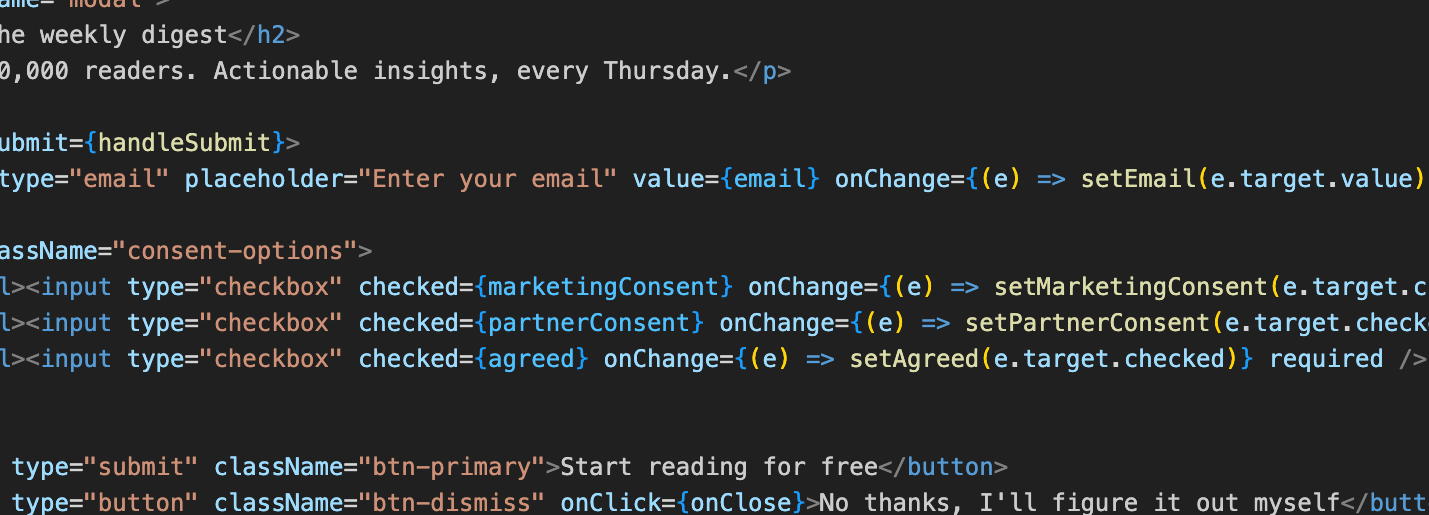

So you prompt for “a newsletter signup popup” and get one with a dismiss button that says “No thanks, I prefer to stay uninformed and want to kick puppies”. You didn’t ask for that. But you got it, because that’s what the training data looked like.

This isn’t a new thing – developers have been copying dark patterns without thinking for years, long before AI got involved. “That’s just what everyone else does” is a phrase older than the invention of computers. Pre-ticked checkboxes, hidden unsubscribe links, confirm-shaming button labels… these spread because developers saw them elsewhere and assumed they were normal.

AI can accelerate this. Instead of copying from one site you happened to visit, you’re now drawing on patterns distilled from the entire internet. The manipulative designs that worked well enough to become common are now baked into the model’s sense of what a “normal” signup flow looks like.

The developer might not even notice. That’s the thing about “vibe coding” – you’re trusting the output. You’re not scrutinising every label, every default, every pre-selected option. A guilt-trip button label or a pre-ticked marketing consent checkbox slips through because it looks normal.

But someone will notice. At best, a user. Or, more problematic for you, a regulator. In the “wild west” days of the internet, dark patterns merely irritated your users, but now they increasingly come with fines attached:

TikTok was fined €345 million¹ for using dark patterns to nudge child users towards privacy-invasive settings. Google was fined €325 million² by France’s CNIL for designing its account creation flow so that refusing advertising cookies was harder than accepting them. The FTC ordered Epic Games to pay $245 million³ in refunds over dark patterns in Fortnite. GDPR requires freely given consent and the EU’s Digital Services Act explicitly addresses deceptive design.

But your favourite AI was trained on the web as it exists in reality. That includes what these companies were doing before they were fined and forced to change, what companies that haven’t been fined yet are doing and what companies are doing in locations where the rules just don’t exist in the same way. The training data doesn’t come with a label saying “this design resulted in a nine-figure fine” or “this one is from a country with no consumer protection laws”. It doesn’t have an opinion on what’s manipulative and what isn’t. It just knows what patterns are common.

And “the AI did it” is not a defence anyone has tried, because it wouldn’t work. You shipped it and your name is on it. How the code got written is your problem.

There’s also the optimisation trap. If you prompt for “a high-converting signup form,” you might get exactly what you asked for, including the potentially dishonest or unethical tricks that make it convert. The AI doesn’t know that you wanted the results without the manipulation.

This is where intent and outcome diverge. The developer didn’t choose a dark pattern. But the user still experiences one. Does intent matter to the person being manipulated? Does it matter to the regulator?

This is very much not an “AI problem”. Humans have been designing manipulative interfaces and copy since writing was invented. But AI makes it easier to do accidentally. The patterns are already in the training data, waiting to be reproduced by someone who never explicitly chose them.

If you’re using AI in your workflow, you need to actually look at what you’ve built. Not just whether it works, but what it’s doing. What does the button say? What’s pre-selected? What’s the path to opting out?
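Some of that looking can be automated as a first pass. Here is a minimal sketch, using only Python’s standard library, that flags two of the patterns mentioned above: pre-ticked checkboxes and guilt-trip labels on buttons and links. The phrase list, the `DarkPatternAudit` class and the `audit` function are all hypothetical names made up for illustration, and the matching is deliberately crude. It will miss plenty and is no substitute for reading the page yourself.

```python
from html.parser import HTMLParser

# Hypothetical phrase list (an assumption, not a standard).
# Extend it for your own product and language.
SHAMING_PHRASES = ("no thanks, i", "i don't want", "i prefer to stay", "i'd rather not")
CLICKABLE_TAGS = {"button", "a"}


class DarkPatternAudit(HTMLParser):
    """Collects warnings about pre-ticked checkboxes and guilt-trip labels."""

    def __init__(self):
        super().__init__()
        self.warnings = []
        self._open_tag = None  # clickable tag whose text is being collected
        self._text = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        input_type = (attr_map.get("type") or "").lower()
        # A bare `checked` attribute arrives with the value None, so test for the key.
        if tag == "input" and input_type == "checkbox" and "checked" in attr_map:
            name = attr_map.get("name") or "(unnamed)"
            self.warnings.append(f"pre-ticked checkbox: {name}")
        if tag in CLICKABLE_TAGS:
            self._open_tag = tag
            self._text = []

    def handle_data(self, data):
        if self._open_tag:
            self._text.append(data)

    def handle_endtag(self, tag):
        if self._open_tag and tag == self._open_tag:
            # Collapse whitespace and normalise curly apostrophes before matching.
            label = " ".join("".join(self._text).split())
            normalised = label.lower().replace("’", "'")
            if any(phrase in normalised for phrase in SHAMING_PHRASES):
                self.warnings.append(f"possible confirm-shaming label: {label!r}")
            self._open_tag = None


def audit(html):
    """Return a list of human-readable warnings for the given HTML string."""
    parser = DarkPatternAudit()
    parser.feed(html)
    parser.close()
    return parser.warnings
```

Calling `audit()` on the generated markup for a signup popup returns a list of warnings to review by hand. An empty list only means these two crude checks found nothing, not that the form is clean.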

These questions have always mattered. AI just made them easier to overlook.

- ¹ Irish Data Protection Commission, “Data Protection Commission announces €345 million fine of TikTok,” 15 September 2023.
- ² CNIL, “Cookies and advertisements inserted between emails: Google fined 325 million euros by the CNIL,” 1 September 2025.
- ³ Federal Trade Commission, “Fortnite Video Game Maker Epic Games to Pay More Than Half a Billion Dollars over FTC Allegations of Privacy Violations and Unwanted Charges,” December 2022.